Keywords 101: Difficulty, Volume, and Segmentation

More content today, in keeping with a cadence that one can only describe as “halting, at best.” But, in spite of my failings, on we go with the SEO for non-scumbags series.

Defining the Important Terms

Last time out, I introduced the idea of keywords, focusing on searcher psychology as the foundation. In this installment, I’m going to get more into the nitty-gritty of keyword selection.

But before doing that, let’s define some terms of art. And I mean really define them, rather than just saying that “difficulty” describes how hard it is to rank for a keyword.

Keyword Volume

I’m listing these concepts in order of ease of understanding, from easiest to hardest. So, let’s start with a softball in the form of keyword “volume.”

Keyword volume is simply the approximate number of times per month that somebody types this keyword into the search engine and examines the results.

I say approximate because none of the tools you’ll use will know the exact number. And, even if they did, they’d know historical numbers and, obviously, not be able to predict the future. As for why “per month” is the standard denominator convention for this, I must confess, dear reader, I do not rightfully know.

Mystery conventions and approximation aside, searches per month does give you a sense for the traffic potential to your site, should you write content targeting this keyword. (Recall from the last post, “targeting the keyword” really means answering the question that you deduce the searcher is asking with this keyword.)

As you brainstorm keywords, I definitely suggest tracking them and their stats with a spreadsheet. When you do this, you’ll acquire enough data to view the keyword volumes in relative terms which, I would argue, is more important than the actual number, per se. In other words, the value in recording keyword volume comes less from the actual number and more from having a sense of “high volume” and “low volume” keywords.
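If you'd rather script the "relative terms" idea than eyeball it in a spreadsheet, here's a minimal sketch. The keywords and volumes are made up for illustration, and using the median of your own list as the high/low cutoff is my assumption, not a standard convention.

```python
from statistics import median

# Hypothetical keywords and monthly volumes, made up for illustration.
keyword_volumes = {
    "devops": 90000,
    "devops interview questions": 5000,
    "c# closure performance": 40,
    "what is a deployment pipeline": 700,
}


def label_relative_volume(volumes):
    """Label each keyword "high" or "low" relative to the median of the list."""
    cutoff = median(volumes.values())
    return {kw: ("high" if vol >= cutoff else "low") for kw, vol in volumes.items()}


labels = label_relative_volume(keyword_volumes)
for keyword, label in labels.items():
    print(f"{keyword}: {label}")
```

The labels only mean something relative to the other keywords you've collected, which is the point: the longer your list, the better your sense of what "high" looks like in your niche.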

Domain Authority

Interestingly, I think domain authority is a little easier to understand than keyword difficulty. And I say this with full knowledge that many of you reading probably can infer what keyword difficulty means and are simultaneously thinking, “what on Earth is domain authority?” Well, domain authority is a very straightforward concept, and your preconception of difficulty may complicate things in any case.

Domain authority is essentially an answer to the question, “how much does the search engine trust your website?”

Obviously there’s a bit more nuance than that, but this is the overarching gist. Domain authority is a proprietary Moz metric (others, like ahrefs, have similar measures, such as “Domain Rating”), and it measures your website’s cachet with the search engine on a logarithmic scale from 0 to 100.

If you’re wondering how it’s calculated, as I said, it’s proprietary. They mention using many factors, but generally some of the most prominent ones involve the number of links to your domain (which reinforces the scumbag SEO’s motivation to spam you begging for links).

For our purposes here, the main importance of domain authority when picking keywords is that it informs how aggressively you can pursue “difficult” keywords.

Keyword Difficulty

Speaking of difficult keywords, let’s define keyword difficulty. The term “difficulty” is shorthand for the full, proper descriptor: “difficulty for you to rank.”

Keyword difficulty is a measure of how hard you will find it to rank for a given keyword.

Now I imagine you’re mentally calling me a liar for saying that this is harder to understand than domain authority, but bear with me. There’s some subtlety at play.

First of all, the term “keyword difficulty” is a bit reductive in that it focuses on the keyword itself, rather than the results. Generally speaking, a “difficult” keyword is one where answers exist in the search engine results page (SERP) that searchers (and other sites, via linking) really appreciate and consider canonical.

So, rather than think in terms of “difficult keyword” I’d think in terms of “well answered question” (or the inverse, of “easy keyword” and “poorly answered question”).

And secondly, it’s easy to conflate an “easy” score with “I should target this,” when other, hidden factors make a keyword hard. For instance, if you write tutorials about AWS, the scores of those keywords tend toward the easy side, in spite of the fact that you’re competing with Amazon itself for most of them, which imposes a huge drag factor on the value of those terms.

Amazon will always outrank you.

Generally speaking, vendors that offer difficulty scores use the same kind of proprietary, often link-based metrics to approximate it. This makes the scores great in aggregate, but imperfect in the small. They (understandably) don’t account for all factors that might make your contribution an unwelcome addition to an already well-established SERP.

Keyword Audience Segmentation

The last consideration is definitely the hardest to articulate. It’s also one that rarely makes it into the keyword research consideration. Let’s talk about audience segmentation with keywords.

Keyword audience segmentation is basically answering the question “what do we know about the person executing this search?”

I’ll have an easier time making my point here with a couple of examples.

Consider first the keyword “devops,” and ask what you know about the person searching for it. The answer is, “probably not much.”

It could be a devops engineer looking for a formal definition of the term for reference. Or, it could be a lawyer seeing the term in a deposition transcript and wondering what it means. Or, really, anything in between or anything in general.

You know nothing about this searcher. It segments the audience terribly.

Now ask yourself what you know about someone searching “c# closure performance.” What do you know about this searcher?

Well, a lot. This searcher is almost certainly an intermediate to advanced .NET developer, concerned with application performance.

Why does this matter?

Well, it helps answer the question of how to speak to the searcher with your article and how to continue the conversation with them in a nurture capacity. It’s much, much better from a content marketing perspective to know exactly who you’re talking to.

So you want to favor searches that segment the audience as much as possible (to your target segment).

Measuring the Variables

Now that we’ve defined these terms, let’s talk a little about how to quantify and measure them.

Keyword Volume

First up, let’s talk volume. To measure volume, I personally use a tool called Keywords Everywhere (KE).

If you look back at my screenshot where I defined keyword volume, this tool’s Chrome plugin is what shows the search volume for the keyword as a heads-up number in my searches. That’s particularly handy, since it lets me see search volumes in real time, as I use the search engine.

It also has functionality that lets you look up keyword volumes in bulk, export them, and generally work with them:

This is a paid tool, but the cost is comically trivial. You get something like 100K keyword lookups for about $10 (check actual pricing here, if you like).

If you’re wondering where they get their search volume, it’s directly from the source — Google. Though, bear in mind that Google’s published data “buckets” the searches, rather than counting them with a ton of precision. So, while this is a reasonable approximation, it is still an approximation.

Another thing to understand about KE and all tools like it is that they don’t like to say “I don’t know” when you look up a keyword.

So when they don’t know, they say “0.”

All of these tools work by having very, very large caches of keywords and periodically updating their volume stats. But they don’t have, in their cache, every combination of words in human existence. So in the event of a cache miss, they just say “0.”

This results in tons of false negatives. Bear that in mind as you do your research, and understand that if Google auto-completes a search for you, it has search volume, whatever the tools tell you.
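If you export volumes into your own spreadsheet or script, one way to keep this caveat front and center is to record a reported “0” as “unknown” rather than “nobody searches this.” Here’s a minimal sketch of that idea. The function name and sample data are hypothetical, and this isn’t how KE or any other tool represents things internally.

```python
from typing import Optional


def normalize_volume(reported: int) -> Optional[int]:
    """Treat a reported volume of 0 as unknown (None), not as "no searches"."""
    return None if reported == 0 else reported


# Hypothetical exported data: keyword -> volume as reported by a tool.
reported = {
    "devops": 90000,
    "some very specific long tail question": 0,
}

normalized = {kw: normalize_volume(vol) for kw, vol in reported.items()}

# Keywords worth checking by hand, e.g. by seeing whether Google auto-completes them.
unknown = [kw for kw, vol in normalized.items() if vol is None]
print(unknown)
```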

Domain Authority

For measuring domain authority, you could use Moz’s “free” domain analysis tool. That makes sense, since domain authority is a proprietary Moz metric. But you only get something like 10 lookups per month for free.

So, I actually use Keywords Everywhere again for this. Check it out.

KE shows you this in heads-up fashion, just as they do with other stats, like volume. So rather than pay Moz to look it up or limit yourself to 10 lookups per month, I’d just google the domain you’re interested in and let KE show you.

Keyword Difficulty

For measuring keyword difficulty, I use ahrefs. This is admittedly not a cheap tool, and I have access to it because Hit Subscribe has an expensive subscription with multiple seat licenses. I think the market rate for ahrefs or any of its competitors (Moz, SEMRush, etc.) starts at around $100 per month.

But, truth be told, it really doesn’t matter too much which you use for this purpose, and good on you if you find a cheaper alternative. The practical application is the same regardless: find a quick and dirty way to score keywords to understand their relative attainability.

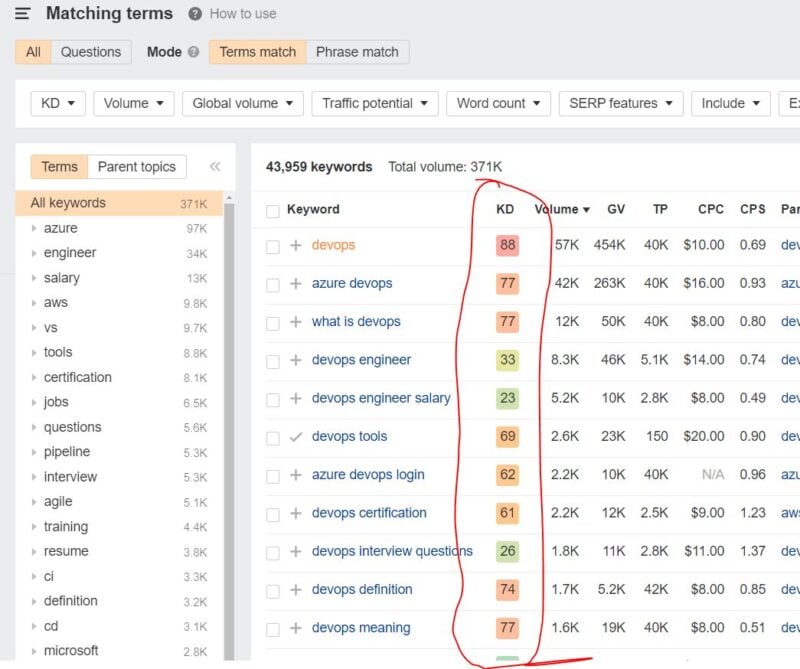

What I’ve done above is searched the term “devops” and asked ahrefs for child keywords (keywords containing the term “devops”). At a glance, I now have a relatively good working understanding of the relative difficulty of various flavors of questions around devops. For instance, it looks like you’d have a much easier time ranking with content addressing people interested in interviewing for DevOps roles than people wanting a definition of the term.

I should mention here that this reductive number is only good for a quick idea.

Before targeting a term and putting actual economic skin in the game, you should always inspect the SERP to see if the difficulty score makes sense. (I may include a section on manually evaluating difficulty in the advanced topics post, but for now here’s a video I recorded about this a few years ago).

Keyword Segmentation

Last up, for completeness’ sake, is keyword segmentation. And there’s really no good automated way to measure this that I’m aware of. I’m sure if you create one, SEOs everywhere will pay you handsomely.

The best way to “measure” keyword segmentation that I can think of is to enlist an SME about the topic in question and ask that person “what percentage of people who search this term are likely members of our target audience?”

I’m not being facetious here.

This rudimentary exercise would put you ahead of the vast majority of keyword researchers, including professional SEOs. After all, how many of them do you think know, offhand, what the search “C# closure performance” says about a searcher?

In your world, this could just mean a simple exercise of asking yourself whether this is a search your target persona would actually execute.

Actually Picking Keywords

Alright, so now we’ve got the variables and how to measure them squared away. Let’s take a look at how you put this together to actually select keywords. And I’m going to try to offer more helpful advice than just “go for low difficulty, high volume, well-segmented keywords.”

Start with Rough Segmentation, Then Either Difficulty or Volume

In a nod to combinatorics and high order language complexity, there are more potential keywords than there are atoms in the universe. And, even culling that to just things that make comprehensible sense in English, you still have a pretty daunting task in front of you if you don’t start somewhere.

So I’d suggest starting with some semblance of topical relevance to your audience, and then look for large batches of keywords that are either easy or that have volume. You can filter from there.

For a concrete example, look at the screenshot of ahrefs above. I could type in “devops” and then do a difficulty filter, setting an upper bound of 20 on results. This would give me a large list (that I could export to a spreadsheet) of easy terms related to DevOps and I could then prioritize based on volume and precision of segmentation.

Likewise, I could do a similar search with ahrefs, setting a minimum bound on traffic. I could then take that list and prioritize it by ease of ranking and precision of segmentation.

However you decide to proceed, I suggest starting with keywords that you know score well with at least one of the variables in question, and then filter from there.
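To make the two starting points concrete, here’s a rough sketch of what the filtering looks like once you’ve exported a list to work with. The keywords, numbers, and field names are made up for illustration, and I’m using plain search volume as a stand-in for the traffic filter. The difficulty ceiling of 20 comes from the example above, while the volume floor of 5,000 is an arbitrary assumption.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    term: str
    volume: int      # approximate searches per month
    difficulty: int  # vendor difficulty score, 0-100


# Hypothetical export; every number here is invented.
keywords = [
    Keyword("devops", 90000, 85),
    Keyword("devops interview questions", 5000, 12),
    Keyword("devops vs sre", 1500, 18),
    Keyword("devops tools", 8000, 45),
]


def easy_first(candidates, max_difficulty=20):
    """Keep easy keywords, then prioritize by volume (highest first)."""
    easy = [k for k in candidates if k.difficulty <= max_difficulty]
    return sorted(easy, key=lambda k: k.volume, reverse=True)


def high_volume_first(candidates, min_volume=5000):
    """Keep high-volume keywords, then prioritize by ease (lowest difficulty first)."""
    popular = [k for k in candidates if k.volume >= min_volume]
    return sorted(popular, key=lambda k: k.difficulty)


for k in easy_first(keywords):
    print(k.term, k.volume, k.difficulty)
```

Either function gives you a shortlist that already scores well on one variable. Precision of segmentation still needs a human pass afterward.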

Do Projected, Segmented Traffic Modeling

For a long time, I reasoned about keywords with the vague idea of “maximize volume, minimize difficulty, make it relevant.” And, that’s fine. We did achieve good traffic results even with that fairly simplistic outlook.

But we really started to hit a lot of hockey-stick-traffic-graph shaped home-runs when we began to model and project well-qualified traffic. Here’s what that looks like.

This is a list of response keywords (a topic for the upcoming 201 post), along with their measured volume and difficulty, for a community site that Hit Subscribe owns and runs as a kind of content lab and community resource.

We measure difficulty, volume, and domain authority (not pictured) as I’ve described above. From there, we use difficulty and the site’s domain authority in an equation that we created based on historical data to create a projection for where we think the site would rank for the keyword. Then, based on that ranking, average click-through rates for SERP positions, and volume, we project traffic to the site for the parent keyword.

As I often tell clients, this isn’t going to win us a Fields Medal, but it helps with keyword prioritization far better than some kind of composite metric that SEO firms and tools give you like “keyword priority.” And as we’ve tuned it over the last year or so, it’s reasonably accurate.

Yours, however, doesn’t necessarily need to be.

I’d suggest just doing something crude here to help yourself model traffic. Maybe as simple as knocking yourself down a position in ranking for every 5 difficulty points, then reducing the traffic you expect by 50% for positions 1-4, by 75% for positions 5-10, and calling it 0 for anything past position 10.

Seriously, it can be that crude, and it will still exceed what most others are doing. It also gives you an excellent way to sort and prioritize which posts to write, all else being equal.
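Here’s one way to read that crude model as code. To be clear, this is a sketch of the back-of-the-napkin version only, not the equation we actually use. Starting at position 1 for a difficulty of 0 and rounding down are my assumptions about how to interpret “a position for every 5 difficulty points.”

```python
def projected_position(difficulty: int, points_per_position: int = 5) -> int:
    """Start at position 1 and drop one position per 5 difficulty points (assumed reading)."""
    return 1 + difficulty // points_per_position


def projected_traffic(volume: int, difficulty: int) -> float:
    """Crude monthly traffic projection from search volume and difficulty."""
    position = projected_position(difficulty)
    if position <= 4:
        return volume * 0.50  # positions 1-4: reduce volume by 50%
    if position <= 10:
        return volume * 0.25  # positions 5-10: reduce volume by 75%
    return 0.0                # past position 10: call it zero


# Hypothetical keywords as (term, volume, difficulty); numbers are invented.
candidates = [
    ("devops interview questions", 5000, 12),
    ("devops tools", 8000, 45),
    ("devops", 90000, 85),
]

# Sort by projected traffic to get a rough order for what to write first.
ranked = sorted(candidates, key=lambda c: projected_traffic(c[1], c[2]), reverse=True)
for term, volume, difficulty in ranked:
    print(term, projected_position(difficulty), projected_traffic(volume, difficulty))
```

Notice how the giant-volume head term drops to the bottom once difficulty pushes its projected position off the first page. That reordering is the whole value of the exercise.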

(Let me know if you’re interested in more about the equations we use — I could always add some methodology to the advanced topics post)

Re-Filter through the Lens of Precise Segmentation

Once you’ve gone through this sequence of steps, I’d suggest one last segmentation sanity check.

If you’re feeling ambitious, you could always assign a percentage of searchers you think represent your target persona, and further refine your traffic projection. But I’d suggest a more binary approach.

Ask yourself if you think a decent chunk, or even the majority, of searchers will hail from your target segment.

If the answer is “yes,” then game on. Otherwise, perhaps deprioritize or reconsider targeting this term.
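Both flavors of this check are easy to sketch. The share values below are gut-feel guesses that you or your SME would supply, and the 50% threshold for the binary version is an arbitrary assumption on my part, so adjust it to whatever “a decent chunk” means for you.

```python
def passes_segmentation_check(target_share: float, threshold: float = 0.5) -> bool:
    """Binary approach: is enough of the audience in your target segment?"""
    return target_share >= threshold


def segmented_traffic(projected_traffic: float, target_share: float) -> float:
    """Ambitious approach: scale projected traffic by the estimated target share."""
    return projected_traffic * target_share


# Hypothetical estimates of what fraction of searchers match the target persona.
estimated_share = {
    "devops": 0.05,
    "c# closure performance": 0.9,
}

for term, share in estimated_share.items():
    verdict = "game on" if passes_segmentation_check(share) else "deprioritize"
    print(term, verdict)
```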

Game Theory: Focus on the Big Picture

I’d like to close this installment of the series by circling back to a theme of the second post: game theory.

I’ve talked specifically about how to take keywords that you brainstorm, and then filter and prioritize them based on important variables. Practically speaking, this will result in a force-ranked list of keywords to target (really: questions to answer).

But people learning this process (and clients, quite often) can get WAY too far into the weeds at this point. This is the point at which you have to resist the impulse to treat each keyword and piece of content addressing it as a project. This is the point at which you have to resist the siren-song of BS SEO-minutiae about which heading to use where and how often to use each keyword and blah-blah-blah-blah-blah.

Instead, pull back and recognize your list of keywords for what it is.

It is a sequence of bets that you’re about to place.

You’re going to commission or create 50 posts. You’ll rank 1st for maybe 5 of them, top 2-4 for maybe 25 of them, 5-10 for maybe 10 of them, 11-20 for another 5, and then just faceplant for the last 5. That’s the nature of the game, and that will be true no matter how much you fuss and agonize over inconsequential details.

You can, of course, influence the specifics of those buckets (e.g. by going after SUPER low difficulty keywords), but the chance element will remain. So embrace it.

When you do SEO right, you’re playing a game like blackjack, but where you’re the house instead of a sucker player. And like the house, you’re not going to bother to obsess over why you didn’t win a particular hand — hey, that happens. You’re going to instead focus on rigging the entire game in your favor and then playing enough hands that you always come out ahead in the end.
